REFLECTION ARTICLE

HISTORICAL INFLUENCES OF VALIDITY THEORY ON NURSING DIAGNOSIS RESEARCH

Marcos Venícios de Oliveira Lopes1, Viviane Martins da Silva2

1 Universidade Federal do Ceará, Nursing Department. Fortaleza, CE, Brasil. ORCID: 0000-0001-5867-8023. Email: marcos@ufc.br

2 Universidade Federal do Ceará, Nursing Department. Fortaleza, CE, Brasil. ORCID: 0000-0002-8033-8831. Email: viviane.silva@ufc.br

ABSTRACT

Objective: To explore validity theory through a historical approach and analyze its influence on research and the development of nursing diagnoses. Method: A theoretical-reflective study based on bibliographic research was conducted to understand the semantic, epistemological, and methodological evolution of validity theory. Results: The historical development of validity theory is organized into five main phases: beginnings in ancient China, the genesis of the concept, theoretical fragmentation, reunification, and deconstruction. The analysis revealed how this theory has been fundamental in constructing diagnostic structures and research models, while simultaneously being an object of criticism and expansion. Validity theory evolved under the influence of various epistemological currents, including the trinitarian, unified, and argument-based approaches. Conclusion: This study offers a comprehensive and critical view of validity theory, highlighting its implications for the development and use of nursing diagnoses in both theory and practice.

Descriptors: Nursing Diagnosis; Test Validity; Validation Study; Nursing Assessment.


How to cite: Lopes MVO, Silva VM. Historical influences of validity theory on nursing diagnosis research. Online Braz J Nurs. 2026;25(1):e20266851. http://doi.org/10.17665/1676-4285.20266851

What is already known:

Validity theory has a complex historical development, marked by different epistemological currents and debates regarding its meaning, methods, and applications.

The validation processes for nursing diagnoses have historically been based on classical methods, often removed from contemporary discussions on validity.

Important gaps still exist, especially regarding the understanding of the theoretical and practical implications of diagnostic validity.

What this article adds:

It offers a comprehensive historical analysis of validity theory, evidencing how different phases influenced the development of nursing diagnoses.

It demonstrates that, albeit with some delay relative to psychometrics, nursing has progressively incorporated advanced validation methods, such as causality models, Item Response Theory (IRT), and latent class analysis.

It highlights the need to deepen the discussion on the meaning and consequences of interpreting diagnostic results, pointing out a critical gap that has yet to be addressed in the field.

INTRODUCTION

The concept of validity constitutes the central foundation for research and practice across various disciplines, functioning as an essential characteristic of the adequacy of inferences drawn from data. In areas such as psychology, education, health sciences, and social sciences, the integrity of results and their applications depend on the solidity of assessments(1). In nursing, interest in validation processes has increased in recent decades, especially in the development of instruments intended to assess patient health status. These processes have been largely based on psychometric methodology and standards established by entities such as the American Psychological Association (APA), the American Educational Research Association (AERA), and the National Council on Measurement in Education (NCME). Although influential, these recommendations have been targets of criticism, fueling a long-standing debate over the concept of validity(2-4).

This debate can be summarized as four essential problems: ontological (the meaning of validity), epistemological (the characteristics of a valid test), methodological (how to investigate validity), and ethical (how to apply the test). Of these, the ontological question, the most fundamental, has received the least attention in the specialized literature(5). The remaining problems, although more widely discussed, also persist without consensus, reflecting different theoretical and methodological positions that have influenced the evolution of the concept.

The term "validity" became systematically integrated into nursing research as health assessment gained recognition as an essential component of care. Although the use of nursing diagnoses still divides professionals, it has become imperative to develop rigorous instruments and identify indicators that guide interventions based on results. In this context, validation processes based on psychometrics found fertile ground in nursing, driven by the development of standardized languages that demand methods capable of legitimizing components of classifications and taxonomies.

Meanwhile, in the fields of psychology and education, discussions on validity theory advanced and gave rise to different currents of thought(2,6-7). However, a large portion of nursing research remains distanced from these discussions, employing definitions, methods, and analyses that reflect outdated approaches. In extreme cases, this mismatch can lead to mistaken interpretations of results and compromise the answers to the four previously described problems(5).

In the epistemological field, diagnostic validity has been traditionally linked to the degree to which defining characteristics, when observed in a sufficient number of cases, reflect the reality of the client-environment interaction(8-9). This perspective remains dominant and concentrates efforts on identifying defining characteristics as evidence of diagnostic content. However, few studies address clinical relationships or causality—central elements in contemporary currents that define validity as the causal relationship between the attribute and the test score(10).

In the methodological field, there has been great evolution since the classical models were initially proposed. Recent approaches seek to expand the understanding of the diagnostic process, utilizing diagnostic tests, middle-range theories, and methods that incorporate causality analyses(11-14). Authors have also related diagnostic validity to the concepts of internal and external validity, expanding its methodological operationalization(15).

In the ethical field, although the idea that valid diagnoses must withstand the scrutiny of nurses in clinical practice is reiterated by various authors(13,16-20), the discussion has been restricted to the need for alignment between scientific evidence and professional practice. However, practical implementation still depends excessively on expert opinion and lacks broader debates comparing approaches designed to bridge the gap between theory and practice, such as translational research and middle-range theories.

Defining validity as applied to nursing diagnoses therefore requires an understanding of its theoretical evolution and of the impact of this trajectory on clinical reasoning. However, this discussion seems to have been cut short in the field, possibly due to the accelerated production of knowledge mediated by digital technologies, the search for simplified answers to complex problems, and competition between different approaches. As a consequence, the concept of validity has frequently been misused and confused with efficacy, adequacy, or evaluation(21).

Based on these considerations, this article aims to explore the evolution of validity theory, discuss its influence on the development of nursing diagnoses, and offer insights into how its historical context continues to shape research methods on the topic.

HISTORICAL DEVELOPMENT OF VALIDITY THEORY

Beginnings of validity theory

Interest in supposedly valid tests to identify essential characteristics of subjects has roots in ancient China, at least 2,200 years before Christ, when exams were applied to imperial officials every three years to verify their functional aptitude. These exams were necessary because government positions were not hereditary, requiring constant selection and evaluation. This system was perfected over time; in 1115 BC, for example, candidates were evaluated in six "arts": music, archery, horsemanship, writing, arithmetic, and the rites and ceremonies of public and private life(22).

Written exams were introduced in the Han dynasty (between 200 BC and 200 AD) and reached their final form around the 1370s. At that time, exams consisted of three stages: the first, in the district's main city, lasted a day and a night, with candidates isolated in booths to compose essays and poems (the pass rate was 1% to 7%). In the second stage, those who passed took district exams in the provincial capital during three-day and three-night sessions (pass rate between 1% and 10%). Those selected moved on to specific exams in Beijing; of these, only about 3% became mandarins eligible for public office. Such exams, though rigorous, lacked procedural validity and included excessively severe practices, leading to their abolition in 1906 by royal decree(22-23).

Although this period has no direct relationship with the idea of nursing diagnosis validity, the Chinese system influenced European practices. In the 18th century, French Enlightenment thinkers such as Voltaire and Quesnay advocated for similar practices, which were instituted in 1791, abolished by Napoleon, and restored years later. In the early 19th century, British diplomats also suggested written exams for civil service, with the first implementation occurring in 1833 to select interns for the Indian civil service(22).

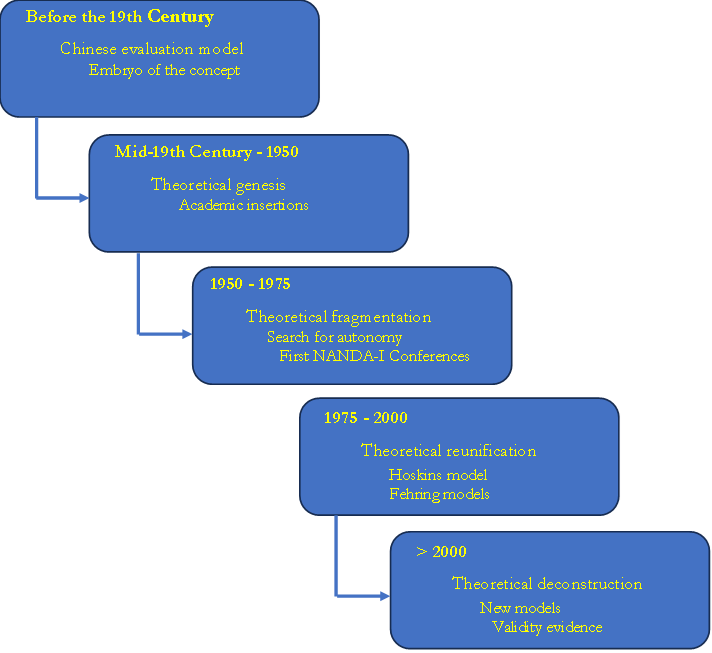

Historians consider that the modern development of validity theory originated in the early 19th century in Europe and the United States. A useful organization of this trajectory divides the evolution into four periods: 1) the genesis of validity theory (mid-1800s to 1951), 2) the fragmentation of validity (1952 to 1974), 3) the reunification of validity (1975 to 1999), and 4) the deconstruction of validity (2000 to 2012)(24). Although the text lacks a critical analysis of this history, the main facts that marked the development of validity theory are well described(25). A summary of this evolution, adapted to the context of nursing diagnoses, can be viewed in Figure 1.

Figure 1 - Summary of the historical evolution of validity theory applied to the context of nursing diagnoses. Fortaleza, CE, Brazil, 2024

Source: prepared by the authors, 2024.

The genesis of validity theory

The first period in the history of validity can be divided into two moments(24): a gestational period, extending from the mid-19th century to 1920, and a crystallization period, between 1921 and 1951. The gestational period was marked by growing interest in Europe and the United States in developing structured exams for school admission, based on the belief that structured tests would increase assessment accuracy and produce fairer decisions. In the United Kingdom, the University of London was founded in 1836 to regulate the exams of its units, and in 1871, it began accepting results from other institutions. Oxford and Cambridge adopted entrance exams in 1857 and 1858, which encouraged improvements in secondary education. In the United States, written exams were introduced in Massachusetts in 1845 for high school certification, later expanding to professional selection.

In this context, statistical techniques applied to the study of heredity were developed(26), and an empirical approach to validation was proposed, suggesting the comparison of measures with independent estimates of human capacities to identify the most informative ones(27). British researchers expanded these ideas by developing correlation methods applicable to psychological attributes(28). The correlation coefficient then became the primary statistical tool in validation studies.
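The empirical logic described above, comparing test scores with an independent estimate of the same capacity, can be illustrated with a minimal sketch of the Pearson correlation coefficient. The function name `pearson_r` and the exam and rating values are hypothetical and serve only as an illustration; they do not come from the historical studies cited.

```python
from math import sqrt


def pearson_r(test_scores, criterion_scores):
    """Pearson correlation between test scores and an independent criterion."""
    if len(test_scores) != len(criterion_scores) or len(test_scores) < 2:
        raise ValueError("need two equally long samples with at least 2 points")
    n = len(test_scores)
    mean_x = sum(test_scores) / n
    mean_y = sum(criterion_scores) / n
    # Deviations of each observation from its sample mean
    dx = [x - mean_x for x in test_scores]
    dy = [y - mean_y for y in criterion_scores]
    cov = sum(a * b for a, b in zip(dx, dy))
    var_x = sum(a * a for a in dx)
    var_y = sum(b * b for b in dy)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: written exam scores and independent teacher ratings
exam = [52, 61, 70, 74, 88]
ratings = [2, 3, 3, 4, 5]
r = pearson_r(exam, ratings)
print(f"criterion correlation: {r:.2f}")
```

A coefficient close to 1 would have been read, in this tradition, as evidence that the exam ranks candidates much as the independent criterion does; it says nothing about whether the criterion itself is sound, which is the difficulty later authors raised.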

Parallel to this, efforts to understand the human mind led French researchers, between 1905 and 1911, to develop tests with progressive levels of difficulty to identify mental retardation in children(22). These instruments spread rapidly. Intelligence tests were also applied to more than 1.5 million American recruits during World War I. Enthusiasm for psychological testing was so significant that it was claimed most "civilized" countries already possessed vocational guidance institutions with trained psychologists(29).

However, by the early 20th century, American scholars began questioning whether structured exams were truly as accurate as believed, highlighting the subjectivity inherent in judgments. In response, the development of instruments with less subjectivity intensified, turning to true/false, multiple-choice, recall, and completion items(30-31). This change fostered the search for objective methods and explains why validity theory initially emerged in the United States(24). The period also saw the proliferation of standardized tests in professional practice and scientific research on intelligence and personality. During this phase, the correlation coefficient was consolidated as central to assessing test quality.

In the transition to the crystallization period, the North American National Association of Directors of Educational Research sought to establish consensus on terms and procedures. It is at this moment that the inaugural definition of validity emerges: the degree to which a test measures what it is supposed to measure(32). A few years later, this wording was slightly modified, consolidating the so-called classical definition: the degree to which a test measures what it purports to measure(33). This definition guided psychometric thinking for decades and influenced subsequent formulations adopted in the field of nursing diagnoses.

From this definition, the need arose to determine how to empirically verify validity. Two initial approaches were proposed: logical content analysis and empirical evidence by correlation—the latter considered the most robust at the time(34). The central challenge consisted of defining appropriate criteria for comparison(35-37). Recommendations included expert judgment, comparison between reduced and full versions of a test, and longitudinal analysis. However, the validity of the criteria themselves remained difficult to demonstrate(38).

Simultaneously, education scholars questioned the exclusive centrality of statistics. They argued that a test could be considered valid if experts, through logical analysis, judged its content consistent with the curriculum(38). It was then proposed that expert judgment should complement empirical approaches(36). The division between criterion-based validity and content-based validity intensified. Although the literature highlights the predominance of the empirical approach, the period also valued content, including the purpose of the instrument and so-called "face validity"(39).

Despite the attention to content, the pre-1950 period is described as a phase oriented toward "prediction"(40-41). The emphasis on empirical criterion methods led to the gradual degradation of the concept of validity, which began to be confused with "validation procedures." While the latter refer to methods of obtaining data, validity concerns how well the resulting interpretations can be sustained. Consequently, instructional works reduced validity to the idea of an empirical correlation coefficient(24).

In nursing, scientific production at the end of the 19th and beginning of the 20th century was incipient; no articles on validity or testing from this period were found in the PubMed and Scopus databases. The first references appear in the 1940s in studies conducted by psychologists on personality tests applied to nurses(42-43). The first study published by nurses directly related to validity theory dates to the late 1950s, describing an empirical approach to identifying characteristics of career success among students(44). Thus, only in later periods did validity theory become part of the profession's themes. At this stage, the idea of nursing diagnoses was embryonic, and discussions about their validation were nonexistent, although interest in clinical assessment strategies was beginning to emerge in the academic sphere.

The fragmentation of validity

In the early 1950s, the APA, NCME, and AERA published new Standards for psychological testing. Under the influence of Lee Cronbach, the document systematized the tendency to classify validity into distinct types, based on the distinction between logical and empirical validity(45). In 1952, the APA proposed four types: content, predictive, status, and congruent(46). The final 1954 version maintained the four but renamed them: status became concurrent validity, and congruent became construct validity(47). The latter was applied when direct logical or empirical analyses were insufficient.

In a seminal article, it was highlighted that construct validity is essentially scientific, requiring the definition of the theoretical attribute responsible for performance(48). The most significant contribution was the defense that validity relates to the interpretations of scores, which must be consistent with a nomological network (a system of laws linking theoretical terms to observable indicators)(5).

The 1966 revision of the Standards unified concurrent and predictive validity under the term criterion validity, leaving three recognized types—a structure maintained in 1974(49). A perception (now considered mistaken) then consolidated that these types were alternative conceptions of validity rather than categories of evidence. Construct validity acquired a central position, although criterion studies continued to proliferate.

From 1960 onwards, validation studies conducted by nurses multiplied, focusing on pediatric care(50-52), professional performance(53-58), technical knowledge(59), and job satisfaction(60). Interest focused on aptitudes and competencies, in line with the tradition of criterion validity. Since the first task force for the classification of nursing diagnoses would only emerge in the 1970s, there were not yet validation processes directed toward this end. The fragmented concept of validity would be incorporated by nursing only between the late 1970s and early 1980s.

The reunification of validity

In the mid-1970s, the interest of validity theory scholars shifted to the process of construct validation. This movement defended the superiority of construct validity over other types(48) and emphasized that one does not validate a test, but rather the interpretations derived from the data produced by specific procedures, shifting the focus of validity from the instrument to the inferences associated with the measurement process(61). These conceptions prepared the ground for the development of a unified approach to validity theory.

The formal start of this change occurred in 1974, when APA, AERA, and NCME presented a new revision of the Standards. From this milestone, two currents began to predominate: the trinitarian conception, according to which the three types of validity were equally important, and the unitary conception, centered exclusively on construct validity(38).

Samuel Messick played a fundamental role in this debate by expanding the ideas of other scholars such as Gulliksen and Loevinger. For him, all validity should be understood as construct validity, and his most significant contribution was to reposition measurement as a central element in all contexts of validity, challenging the previous view that separated validity for measurement and validity for prediction(62).

Advocates of the unitary approach argued that validity should refer only to the inferences derived from the test, rejecting content validity as an independent type, as it concerned only the universe of items(38). They also defended that criterion validity evidence was insufficient, as it depended on external criteria that would likewise need to be validated. Thus, criterion validity would not represent a type of validity, but only one of many sources of evidence for the construct validation process(62). Furthermore, it was argued that different forms of evidence did not mean different types of validity(63-64).

Validity was then redefined as an integrated evaluative judgment of the degree to which empirical evidence and theoretical rationales support the adequacy and relevance of inferences and actions derived from test results(65). A central element of this definition was the introduction of the social consequences of interpretations(66). Thus, the unitary approach incorporated relevance, utility, values, and social impacts, elements that had been mentioned before but never integrated into the concept of validity. Paradoxically, the attempt at simplification produced a more complex model that became a target for criticism.

Criticisms of the unitary model concentrated on the difficulty of operationalizing it in applied settings. Many authors argued that expanding the concept to include social consequences led to conceptual disorganization and generated more confusion than clarity. This led to the recommendation to restrict the concept of validity and set in motion the deconstruction of the unitary approach(67-68).

In nursing, the period between 1970 and 1990 showed a gradual growth of interest in validity. Although a moderate increase in publications was observed between the mid-1970s and the end of the 1980s, the most significant expansion occurred in the 1990s. The first six articles on the validation of nursing diagnoses were published in 1985, almost all in volume 20(4) of Nursing Clinics of North America(69-74). The exception was an article published in Orthopaedic Nursing on the validation of the diagnosis "Altered body image"(75).

In the 1970s, the main concern of the community dedicated to nursing diagnoses was defining the concept itself and establishing an initial taxonomic structure. The first National Conference on Classification of Nursing Diagnoses occurred in 1973 at Saint Louis University and was based on inductive and deductive approaches to develop diagnoses(76). Although the inductive approach included clinical validation, the meaning of this stage was not detailed. Part of the approach was influenced by set theory, Venn diagrams, and Boolean algebra to classify clinical entities(77). At this conference, 30 primary diagnoses and 100 diagnostic categories were listed, differentiated by etiology and duration; however, defining characteristics were described only for the main diagnoses and 14 categories, which suggests that the intention was not to create a structured taxonomy but only to identify possible clinical problems(76).

It was only in 1979 that the first methodological validation model for nursing diagnoses emerged(78), focused on identifying defining characteristics and evidence supporting the existence of the diagnosis. However, the model reflected a conception of validity aligned with the early phases of psychometric theory—logical analysis and empirical evidence—and ignored the advances of unified validity theory. There was also conceptual confusion, such as the association of validity with the degree of agreement between nurses.

In 1982, the North American Nursing Diagnosis Association (NANDA) was founded and began collaborating with the American Nurses Association (ANA). An attempt to include NANDA diagnoses in the International Classification of Diseases (ICD) in 1986 was rejected for lack of international representativeness. Taxonomy I was published in 1987, and the following year, efforts began to expand international participation(79).

During the 1980s, studies on nursing diagnosis validation intensified. Diagnostic validity was defined as dependent on the simultaneous occurrence of clinical cues and the congruence between cues and diagnoses in large samples, and NANDA's empiricism was criticized by arguing that validations based only on expert opinion were insufficient(19). This criticism referred to the crystallization period of validity theory, marked by the tension between logical and empirical analyses.

Two sources of evidence for validation were proposed: expert and clinical(13). This was essentially a logical analysis based on expert opinion and an empirical analysis similar to concurrent validity, using a weighted average of diagnostic assessments as a criterion. In 1989, a three-phase model was presented: concept analysis, expert validation, and clinical validation, reflecting psychometric theory discussions at the end of fragmentation(80).
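The idea of using a weighted average of assessments as a criterion can be sketched as follows. This is only an illustration under stated assumptions: the 1-to-5 rating scale, the mapping of ratings to weights in `RATING_WEIGHTS`, and the indicator names are hypothetical and are not taken from the models cited above.

```python
# Hypothetical mapping from a 1-5 expert rating to a weight in [0, 1].
RATING_WEIGHTS = {1: 0.0, 2: 0.25, 3: 0.5, 4: 0.75, 5: 1.0}


def weighted_score(ratings):
    """Average the weights assigned to one indicator by all experts."""
    if not ratings:
        raise ValueError("at least one rating is required")
    return sum(RATING_WEIGHTS[r] for r in ratings) / len(ratings)


def summarize(indicator_ratings):
    """Return the weighted score of every indicator in the panel."""
    return {name: weighted_score(r) for name, r in indicator_ratings.items()}


# Hypothetical panel: four experts rate three candidate defining characteristics
panel = {
    "indicator A": [5, 5, 4, 4],
    "indicator B": [3, 2, 3, 4],
    "indicator C": [1, 2, 1, 1],
}
scores = summarize(panel)
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

In a sketch like this, the resulting scores depend entirely on who sits on the panel and on the chosen weights, which is why approaches of this kind were later criticized for relying excessively on expert opinion.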

In the mid-1990s, criticisms of previous models argued that one of the main weaknesses was the lack of adequate prior theoretical conceptualization(80-81). This movement brought nursing closer to the debate on the reunification of validity theory, even though in psychometrics, construct validity was gaining centrality and the concept was expanding to include ethical and social dimensions.

Thus, this period marks the first effective movements in the search for methods to guide the construction and validation processes of nursing diagnoses. However, these movements seemed to incorporate not only current ideas from that period but also structures and phases typical of earlier periods, producing a mix of distinct interpretations of what the validity of a nursing diagnosis would be.

The deconstruction of validity

Between the 1990s and the early 2000s, a new methodology was developed to provide a foundation for validation practice based on argumentation. Unlike the current that conceived validity as an evaluative judgment sustained by evidence, this new proposal described how such evidence should be organized into a coherent validity argument(40,82-83). The approach proposed that the validation process should begin by defining the interpretation and intended use of test scores, continue by stating explicitly, in the form of a structured argument, the claims that support this interpretation and by testing their underlying assumptions, and finish by judging the argument in terms of coherence, completeness, and plausibility(66).

Throughout the 2000s, this approach was deepened and relied on Cronbach and Meehl's notion of a nomological network, but it distanced itself from Messick's unified conception. Its main criticism of Cronbach and Messick's proposals was the so-called "endless search for validity," since those authors linked validity to continuous scientific investigation into the meaning of scores, dependent on theories relating a construct to an extensive network of other constructs(24). In opposition, the new approach argued that validation would depend exclusively on the interpretation and use of results that the test user has in mind(42). Thus, for tests targeting relatively simple attributes, only a limited set of evidence and analyses would be sufficient.

To sustain this perspective, a distinction was established between observable attributes and theoretical constructs(83). Interpretations based on constructs would require broad scientific investigation, typical of traditional construct validation, while interpretations associated with observable attributes, such as mathematical proficiency or vocabulary knowledge, would be more manageable from a methodological standpoint. In this way, the new methodology decomposed and simplified the concept of validity and reduced the central place of theoretical constructs.

Other authors also criticized the unified model. For example, the inclusion of ethical aspects in the concept of validation was rejected on the grounds that scientific and ethical analyses should remain in distinct spheres, since merging them would accentuate the dissociation between the theory and the practice of validation(84). Another even more radical criticism of the conception of validity adopted for decades by the APA, AERA, and NCME argued that validity should be conceived solely as a property of tests, returning to the classical definition(3).

From the early 2000s until the time this text was written, a significant growth in the number of publications on validity theory and validation processes was observed. Among psychometricians, intense debates persist among defenders of the trinitarian, unitary, and argument-based currents, as well as of alternative proposals. In 2012, the journal Measurement dedicated a special issue to discussing a proposal to clarify the consensual definition of validity presented in 1999 by AERA, APA, and NCME(85). The participation of various specialists revealed a wide range of favorable and contrary positions, highlighting the difficulty of reaching a consensus on the concept of validity.

In nursing, this recent period follows a similar movement, with a progressive increase in interest in the topic. Between 2000 and 2023, a search in the Scopus database with the strategy ("Validity" OR "Validation") AND "Nursing" identified 7,574 publications in the area, with a clearly upward trend, reaching 574 articles in 2023. Of these, 378 included the term nursing diagnosis, also with an upward trend, reaching 31 articles on nursing diagnosis validation in 2021. The sharpest increase in these studies occurred from 2015 onwards, when more than 20 articles were published annually.

In this context, new approaches for the validation of nursing diagnoses were proposed. In 1989, NANDA promoted a meeting with specialists in research methods and nursing diagnoses to discuss the use of quantitative and qualitative methodologies in the validation process(86). Among the works presented, only six articles explicitly defined the term validity; three used the classical definition from the 1920s, even though cited from contemporary references(87-90). Two other articles relied on old models and a conception of validity aligned with the fragmentation period(91-92). This suggests that the concept of validity applied to nursing diagnoses was developing at an earlier stage than the validity theory discussed in psychometrics.

Different qualitative and quantitative methods supposedly applicable to nursing diagnosis validation were proposed and included grounded theory(93-94), ethnography and phenomenology(92), concept analysis(95), triangulation(88), epidemiological methods(96), and classical multivariate techniques(87,89-90,97). Despite the wide methodological variety pointed out at this meeting, nursing diagnosis validation studies remained, to a large extent, centered on the approach of the 1990s(13). Only from the 2000s onwards did Latin American groups begin to adopt differentiated strategies, such as the use of diagnostic tests based on a panel of judges(11), modeling by item response theory(98), latent class analysis(14), and development of middle-range theories(12).
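As an illustration of the diagnostic-test strategy mentioned above, the accuracy of a single clinical indicator can be summarized by its sensitivity and specificity against a reference standard. The sketch below assumes that the reference standard is the judgment of a panel; the function name `accuracy_measures` and the eight hypothetical patients are illustrative only.

```python
def accuracy_measures(indicator_present, diagnosis_present):
    """Sensitivity and specificity of one indicator against a reference standard."""
    if len(indicator_present) != len(diagnosis_present):
        raise ValueError("both lists must describe the same patients")
    tp = fp = fn = tn = 0
    for has_indicator, has_diagnosis in zip(indicator_present, diagnosis_present):
        if has_diagnosis and has_indicator:
            tp += 1  # indicator present, diagnosis present
        elif has_diagnosis:
            fn += 1  # indicator absent, diagnosis present
        elif has_indicator:
            fp += 1  # indicator present, diagnosis absent
        else:
            tn += 1  # indicator absent, diagnosis absent
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need patients both with and without the diagnosis")
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Hypothetical sample of eight patients; the diagnosis column stands for
# the reference standard (assumed here to be the panel's judgment).
indicator = [True, True, True, False, False, False, True, False]
diagnosis = [True, True, True, True, False, False, False, False]
measures = accuracy_measures(indicator, diagnosis)
print(measures)
```

Latent class analysis and item response theory, also cited above, address the same question without assuming that the reference standard is free of error; they are not shown here.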

This period reflects the search for an ontology and an epistemology for the validity theory of nursing diagnoses. This process appears to be ongoing and is being influenced by new information technologies, including artificial intelligence and the development of electronic health record systems. This historical evolution underscores the need for researchers in the area to improve their theoretical and methodological grounding in order to revise and propose diagnostic structures that allow for an adequate and coherent interpretation of the human responses expressed by those in need of nursing care. Although temporally out of step with the evolution of psychometric validity theory, nursing diagnosis validation processes have developed progressively, incorporating more sophisticated methods. However, an important gap persists: an in-depth discussion on the meaning of the results produced in these processes.

CONCLUSION

Even though nursing has progressively advanced in incorporating more sophisticated methods, a conceptual mismatch persists in relation to contemporary discussions on validity, especially regarding the interpretation of diagnostic results and their consequences. Given this, it is recommended that researchers state more rigorously the theoretical assumptions guiding their validation studies, clearly define the intended interpretations and uses of the diagnoses, and organize empirical evidence coherently with these objectives. In education, it is essential to extend instruction on nursing diagnoses beyond the reproduction of classificatory structures, incorporating the historical evolution of methods and their implications for clinical reasoning and decision-making.

CONFLICT OF INTERESTS

The authors declare no conflict of interests.

FUNDING

This work was supported by the National Council for Scientific and Technological Development (CNPq), grant Nos. 310378/2023-0 and 313495/2021-1.

REFERENCES

1. Fabrigar LR, Wegener DT, Petty RE. A Validity-Based Framework for Understanding Replication in Psychology. Pers Soc Psychol Rev. 2020;24(4):316-344. https://doi.org/10.1177/1088868320931366. PMID: 32715894.

2. Borsboom D, Markus KA. Truth and Evidence in Validity Theory. J Educ Meas. 2013;50(1):110-114. https://doi.org/10.1111/jedm.12006.

3. Borsboom D, Mellenbergh GJ, van Heerden J. The Concept of Validity. Psychol Rev. 2004;111(4):1061-1071. https://doi.org/10.1037/0033-295x.111.4.1061. PMID: 15482073.

4. Sireci S, Faulkner-Bond M. Validity evidence based on test content. Psicothema. 2014;1(26):100-107. https://doi.org/10.7334/psicothema2013.256. PMID: 24444737.

5. Pasquali L. Validade dos testes psicológicos: será possível reencontrar o caminho?. Psic., Teor. Pesq. (Online). 2007;23(spe):99-107. https://doi.org/10.1590/s0102-37722007000500019.

6. Markus KA, Borsboom D. Frontiers of test validity theory: measurement, causation, and meaning. London: Routledge; 2013.

7. Pasquali L. Psicometria: teoria dos testes na psicologia e na educação. 4th ed. Petrópolis: Vozes; 2004.

8. Gordon M. Nursing diagnosis: process and application. 3rd ed. St Louis: Mosby; 1993.

9. Gordon M, Sweeney MA. Methodological Problems and Issues in Identifying and Standardizing Nursing Diagnoses. ANS Adv Nurs Sci. 1979;2(1):1-16. https://doi.org/10.1097/00012272-197910000-00002. PMID: 114094.

10. Borsboom D, Wijsen LD. Frankenstein’s validity monster: the value of keeping politics and science separated. Assess Educ. 2016;23(2):281-283. https://doi.org/10.1080/0969594x.2016.1141750.

11. Lopes MV de O, Silva VM da, Araujo TL de. Methods for Establishing the Accuracy of Clinical Indicators in Predicting Nursing Diagnoses. Int J Nurs Knowl. 2012;23(3):134-139. https://doi.org/10.1111/j.2047-3095.2012.01213.x. PMID: 23043652.

12. Lopes MV de O, Silva VM da, Herdman TH. Causation and Validation of Nursing Diagnoses: A Middle Range Theory. Int J Nurs Knowl. 2017;28(1):53-59. https://doi.org/10.1111/2047-3095.12104. PMID: 26095430.

13. Fehring RJ. Methods to validate nursing diagnoses. Heart Lung. 1987;16(6 Pt 1):625-629. PMID: 3679856.

14. Lopes MV de O, Silva VM da. Métodos avançados de validação de diagnósticos de enfermagem. In: Herdman TH, Napoleão AA, Lopes CT, Silva VM da, editors. PRONANDA: Programa de atualização em Diagnósticos de Enfermagem. Ciclo 4, v. 3. Porto Alegre: Artmed Panamericana; 2016. p. 31-74.

15. Creason NS. Clinical Validation of Nursing Diagnoses. Int J Nurs Terminol Classif. 2004;15(4):123-132. https://doi.org/10.1111/j.1744-618x.2004.tb00009.x. PMID: 15712860.

16. Lopes MV de O, Silva VM da, Araujo TL. Métodos de pesquisa para validação clínica de conceitos diagnósticos. In: Herdman TH, editor. PRONANDA: Programa de atualização em Diagnósticos de Enfermagem. Conceitos básicos. Porto Alegre: Artmed Panamericana; 2013. p. 87-132.

17. Lunney M. Critical Thinking and Accuracy of Nurses' Diagnoses. Int J Nurs Terminol Classif. 2003;14(3):96-107. https://doi.org/10.1111/j.1744-618x.2003.00096.x. PMID: 14649031.

18. Mazalová L, Marečková J. Types of validity in the research of NANDA International components. Profese online. 2012;5(2):11-15. https://doi.org/10.5507/pol.2012.011.

19. Tanner CA, Hughes AM. Nursing diagnosis: issues in clinical practice research. Top Clin Nurs. 1984;5(4):30-38. PMID: 6558994.

20. Whitley GG. Processes and Methodologies for Research Validation of Nursing Diagnoses. Int J Nurs Terminol Classif. 1999;10(1):5-14. https://doi.org/10.1111/j.1744-618x.1999.tb00016.x. PMID: 10358519.

21. Haig BD. Construct Validation and Clinical Assessment. Behav Change. 1999;16(1):64-73. https://doi.org/10.1375/bech.16.1.64.

22. DuBois PH. A history of psychological testing. Boston: Allyn and Bacon; 1970.

23. Gregory RJ. The history of psychological testing. In: Gregory RJ, editor. Psychological testing: history, principles, and applications. 6th ed. London: Pearson Education; 2010. p. 56-81.

24. Newton PE, Shaw SD. Validity in educational & psychological assessment. London: Sage; 2014.

25. Borsboom D. Zen and the art of validity theory. Assess Educ. 2016;23(3):415-421. https://doi.org/10.1080/0969594x.2015.1073479.

26. Galton F. Hereditary genius. London: Macmillan and Co.; 1869.

27. Cattell JM. V.—Mental Tests and Measurements. Mind. 1890;15(59):373-381. https://doi.org/10.1093/mind/os-xv.59.373.

28. Spearman C. “General Intelligence,” Objectively Determined and Measured. Am J Psychol. 1904;15(2):201-292. https://doi.org/10.2307/1412107.

29. Burt CL. Historical sketch of the development of psychological tests. In: Board of Education, editor. Report of the consultative committee on psychological tests of educable capacity and their possible use in the public system of education. London: His Majesty’s Stationery Office; 1924. p. 1-61.

30. Meyer M. The Grading of Students. Science. 1908;28(712):243-250. https://doi.org/10.1126/science.28.712.243. PMID: 17820846.

31. Ruch GM. The objective or new-type examination: an introduction to educational measurement. Chicago: Scott, Foresman and Company; 1929.

32. Buckingham BR. Intelligence and its measurement: A symposium--XIV. J Educ Psychol. 1921;12(5):271-275. https://doi.org/10.1037/h0066019.

33. Ruch GM. The improvement of the written examination. Chicago: Scott, Foresman; 1924.

34. Beckstead JW. Content validity is naught. Int J Nurs Stud. 2009;46(9):1274-1283. https://doi.org/10.1016/j.ijnurstu.2009.04.014. PMID: 19486976.

35. Bingham WV. Aptitudes and aptitude testing. New York: Harper & Bros; 1937.

36. Kelley TL. Interpretation of educational measurements. New York: Macmillan; 1927.

37. Thurstone LL. The reliability and validity of tests: derivation and interpretation of fundamental formulae concerned with reliability and validity of tests and illustrative problems. Ann Arbor, Mich.: Edwards Brothers; 1932.

38. Sireci SG. The Construct of Content Validity. Soc Indic Res. 1998;45(1-3):83-117. https://doi.org/10.1023/a:1006985528729.

39. Rulon PJ. On the validity of educational tests. Harv Educ Rev. 1946;16:290-296.

40. Kane MT. Current Concerns in Validity Theory. J Educ Meas. 2001;38(4):319-342. https://doi.org/10.1111/j.1745-3984.2001.tb01130.x.

41. Shepard LA. Chapter 9: Evaluating Test Validity. Rev Res Educ. 1993;19(1):405-450. https://doi.org/10.3102/0091732x019001405.

42. Bennett GK, Gordon HP. Personality test scores and success in the field of nursing. J Appl Psychol. 1944;28(3):267-278. https://doi.org/10.1037/h0056001.

43. Gordon HP. Study Habits Inventory scores and scholarship. J Appl Psychol. 1941;25(1):101-107. https://doi.org/10.1037/h0058849.

44. Hawkins NG. A Measure of Empirical Validity. Nurs Res. 1959;8(1):13-17. https://doi.org/10.1097/00006199-195908010-00004.

45. Cronbach LJ. Essentials of psychological testing. New York: Harper & Brothers; 1949.

46. American Psychological Association. Committee on Test Standards. Technical recommendations for psychological tests and diagnostic techniques: preliminary proposal. Am Psychol. 1952;7(8):461-475. https://doi.org/10.1037/h0056631.

47. American Psychological Association. Technical recommendations for psychological tests and diagnostic techniques. Psychol Bull. 1954;51(2, Pt.2):1-38. https://doi.org/10.1037/h0053479. PMID: 13155713.

48. Cronbach LJ, Meehl PE. Construct validity in psychological tests. Psychol Bull. 1955;52(4):281-302. https://doi.org/10.1037/h0040957. PMID: 13245896.

49. American Educational Research Association, American Psychological Association, National Council on Measurement in Education. Standards for educational and psychological testing. Washington, DC: APA; 1974.

50. Chamorro IL, Davis ML, Green D, Kramer M. Development of an instrument to measure premature infant behavior and caretaker activities: time-sampling methodology. Nurs Res. 1973;22(4):300-309. https://doi.org/10.1097/00006199-197307000-00004. PMID: 4489647.

51. Feldman H. Validity of a feedback system for evaluation of pediatric nursing care. Nurs Res. 1965;14(3):257-261.

52. Rodgers B, Ferhott J, Cooper CL. A Screening Tool to Detect Psychosocial Adjustment of Children with Cystic Fibrosis. Nurs Res. 1974;23(5):420-425. https://doi.org/10.1097/00006199-197409000-00009.

53. Bergman R, Edelstein A, Rotenberg A, Melamed Y. Psychological tests: their use and validity in selecting candidates for schools of nursing in Israel. Int J Nurs Stud. 1974;11(2):85-109. https://doi.org/10.1016/0020-7489(74)90008-x. PMID: 4496609.

54. Johnson DM, Wilhite MJ. Reliability and Validity of Subjective Evaluation of Baccalaureate Program Nursing Students. Nurs Res. 1973;22(3):257-261. https://doi.org/10.1097/00006199-197305000-00012.

55. Mowbray JK, Taylor RG. Validity of Interest Inventories for the Prediction of Success in a School of Nursing. Nurs Res. 1967;16(1):78-80. https://doi.org/10.1097/00006199-196701610-00016.

56. Seither FG. A Predictive Validity Study of Screening Measures Used to Select Practical Nursing Students. Nurs Res. 1974;23(1):60-62. https://doi.org/10.1097/00006199-197401000-00013.

57. Yu Chao YM. [Validity and reliability of scoring performed nursing procedures]. Hu Li Za Zhi. 1974;21(4):50-60. Chinese. PMID: 4498580.

58. Zedeck S, Baker HT. Nursing performance as measured by behavioral expectation scales: A multitrait-multirater analysis. Organ Behav Hum Perform. 1972;7(3):457-466. https://doi.org/10.1016/0030-5073(72)90029-3.

59. Carlson CE, Vernon DTA. Measurement of informativeness of hospital staff members. Nurs Res. 1973;22(3):198-204. https://doi.org/10.1097/00006199-197305000-00002.

60. Munson FC, Heda SS. An Instrument for Measuring Nursing Satisfaction. Nurs Res. 1974;23(2):159-165. https://doi.org/10.1097/00006199-197403000-00013.

61. Cronbach LJ. Test validation. In: Thorndike R, editor. Educational measurement. 2nd ed. Washington, DC: American Council on Education; 1971. p. 443-507.

62. Messick S. The standard problem: Meaning and values in measurement and evaluation. Am Psychol. 1975;30(10):955-966. https://doi.org/10.1037/0003-066x.30.10.955.

63. Messick S. Test validity and the ethics of assessment. Am Psychol. 1980;35(11):1012-1027. https://doi.org/10.1037/0003-066x.35.11.1012.

64. Messick S. Meaning and Values in Test Validation: The Science and Ethics of Assessment. Educ Res. 1989;18(2):5-11. https://doi.org/10.3102/0013189x018002005.

65. Messick SJ. Validity. In: Linn RL, editor. Educational measurement. 3rd ed. New York: American Council on Education/Macmillan; 1989. p. 13-103.

66. Wolming S, Wikström C. The concept of validity in theory and practice. Assess Educ. 2010;17(2):117-132. https://doi.org/10.1080/09695941003693856.

67. Mehrens WA. The Consequences of Consequential Validity. Educ Meas Issues Pract. 1997;16(2):16-18. https://doi.org/10.1111/j.1745-3992.1997.tb00588.x.

68. Popham WJ. Consequential validity: Right Concern‐Wrong Concept. Educ Meas Issues Pract. 1997;16(2):9-13. https://doi.org/10.1111/j.1745-3992.1997.tb00586.x.

69. McDonald BR. Validation of Three Respiratory Nursing Diagnoses. Nurs Clin North Am. 1985;20(4):697-710. https://doi.org/10.1016/s0029-6465(22)01915-6.

70. Munns DC. A Validation of the Defining Characteristics of the Nursing Diagnosis “Potential for Violence”. Nurs Clin North Am. 1985;20(4):711-722. https://doi.org/10.1016/s0029-6465(22)01916-8. PMID: 3852301.

71. Pokorny BE. Validating a Diagnostic Label. Nurs Clin North Am. 1985;20(4):641-655. https://doi.org/10.1016/s0029-6465(22)01911-9.

72. Smith JK. Social reality as mind-dependent versus mind-independent and the interpretation of test validity. J Res Dev Educ. 1985;19(1):1-9.

73. Vincent KG. The Validation of a Nursing Diagnosis. Nurs Clin North Am. 1985;20(4):631-640. https://doi.org/10.1016/s0029-6465(22)01910-7.

74. Voith AM, Smith DA. Validation of the Nursing Diagnosis of Urinary Retention. Nurs Clin North Am. 1985;20(4):723-729. https://doi.org/10.1016/s0029-6465(22)01917-x.

75. Baird SE. Development of a Nursing Assessment Tool to Diagnose Altered Body Image in Immobilized Patients. Orthop Nurs. 1985;4(1):47-54. https://doi.org/10.1097/00006416-198501000-00009. PMID: 3844713.

76. Gebbie KM, Lavin MA, editors. Classification of nursing diagnoses: proceedings of the first national conference. St. Louis: C.V. Mosby; 1975.

77. Feinstein AR. Clinical judgment. Baltimore: Williams & Wilkins; 1967.

78. Gordon M, Sweeney MA. Methodological Problems and Issues in Identifying and Standardizing Nursing Diagnoses. ANS Adv Nurs Sci. 1979;2(1):1-16. https://doi.org/10.1097/00012272-197910000-00002. PMID: 114094.

79. Farias JN, Nóbrega MML, Pérez VLAB, Coler MS. Diagnóstico de Enfermagem: uma abordagem conceitual e prática. João Pessoa: Santa Marta; 1990.

80. Hoskins LM. Clinical validation methodologies for nursing diagnosis research. In: Carroll-Johnson RM, editor. Classification of nursing diagnoses: proceedings of the eighth conference. Philadelphia: Lippincott; 1989. p. 126-131.

81. Fehring RJ. The Fehring model. In: Carroll-Johnson RM, Paquette M, editors. Classification of nursing diagnoses: proceedings of the tenth conference. Philadelphia: Lippincott; 1994. p. 55-62.

82. Kane MT. An argument-based approach to validity. Psychol Bull. 1992;112(3):527-535. https://doi.org/10.1037/0033-2909.112.3.527.

83. Kane MT. Validating the interpretations and uses of test scores. In: Lissitz RW, editor. The concept of validity. Charlotte: Information Age Publishing; 2009. p. 39-64.

84. Cizek GJ. Defining and distinguishing validity: Interpretations of score meaning and justifications of test use. Psychol Methods. 2012;17(1):31-43. https://doi.org/10.1037/a0026975. PMID: 22268761.

85. Newton PE. Clarifying the Consensus Definition of Validity. Measurement (Mahwah N J). 2012;10(1-2):1-29. https://doi.org/10.1080/15366367.2012.669666.

86. Chang BL, Kim MJ, Jones P, McFarlane E, editors. Proceedings of the Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989 Apr 28-30. Palm Springs, CA. St. Louis: NANDA; 1989.

87. Chang BL. Reliability and construct validity. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 217-232.

88. Creason NS. Clinical validation of nursing diagnoses. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 278-304.

89. Padilla GV. Tool development: reliability and related statistics. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 177-184.

90. Schroeder MA. Tool development: validity related to nursing diagnoses. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 147-165.

91. Gordon M. Concept development: response to Knafl. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 64-74.

92. Miller JF. Differentiating phenomenologic and ethnographic research approaches: response to Dreher. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 98-113.

93. Strauss A, Corbin JM. Grounded Theory’s applicability to nursing diagnostic research. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 4-24.

94. Thomas MD. Grounded Theory and Nursing Diagnosis. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 25-36.

95. Knafl KA. Concept development. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 37-63.

96. Alexander C. Epidemiologic approaches to validation of nursing diagnoses. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 121-136.

97. Fitzmaurice J. Tool development: validity related to nursing diagnoses – response to Schroeder. In: Chang BL, Kim MJ, Jones P, McFarlane E, editors. Invitational Conference on Research Methods for Validating Nursing Diagnoses; 1989; Palm Springs. California: NANDA; 1989. p. 166-176.

98. Oliveira-Kumakura AR de S, Caldeira S, Simão TP, Camargo-Figuera FA, Cruz D de ALM da, Carvalho EC de. The Contribution of the Rasch Model to the Clinical Validation of Nursing Diagnoses: Integrative Literature Review. Int J Nurs Knowl. 2018;29(2):89-96. https://doi.org/10.1111/2047-3095.12162. PMID: 27781407.

Editors:

Rosimere Ferreira Santana (ORCID: 0000-0002-4593-3715)

Geilsa Soraia Cavalcanti Valente (ORCID: 0000-0003-4488-4912)

Nuno Felix (ORCID: 0000-0002-0102-3023)

Corresponding author: Marcos Venícios de Oliveira Lopes (marcos@ufc.br)

Escola de Enfermagem Aurora de Afonso Costa – UFF

Rua Dr. Celestino, 74 – Centro, CEP: 24020-091 – Niterói, RJ, Brazil

Journal email: objn.cme@id.uff.br